Building a Multi-Agent System for Smarter Advertising: A Step-by-Step Guide

Introduction

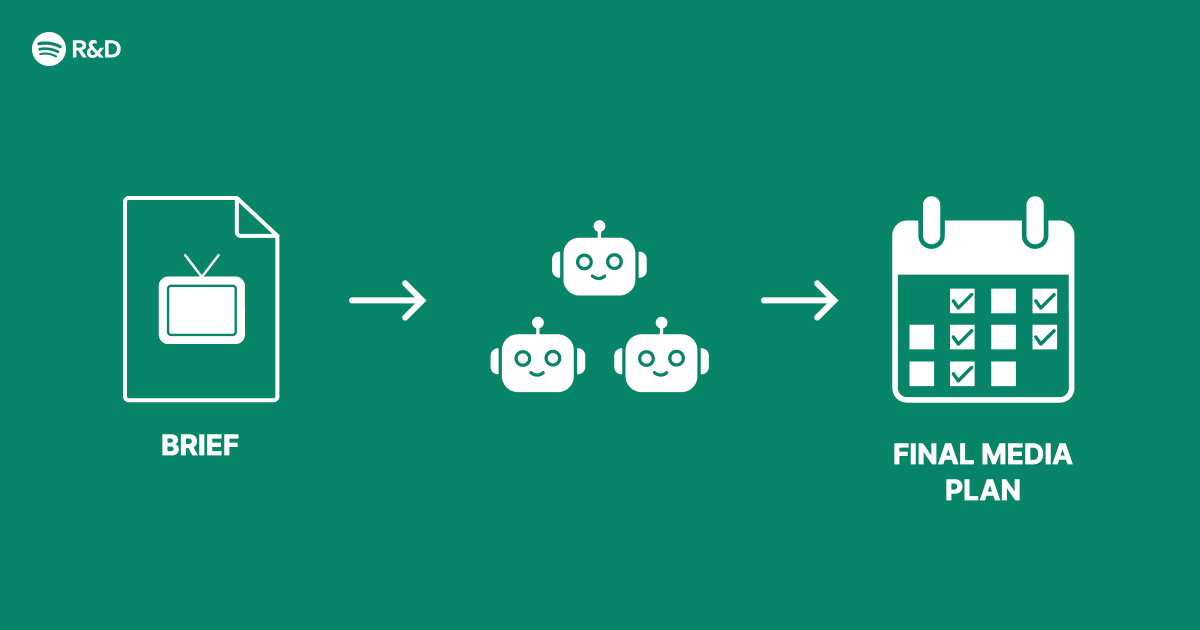

Advertising at scale demands intelligent decision-making across multiple dimensions: whom to target, what creative to show, how much to bid, and when to serve an ad. A single monolithic model often struggles to balance these competing objectives. Inspired by Spotify's engineering approach, a multi-agent architecture decomposes the advertising problem into specialized agents that collaborate to deliver smarter, more efficient campaigns. This guide walks you through designing and implementing such a system, from defining agent roles to orchestrating their interactions.

What You Need

- Data pipeline: Streaming platform (e.g., Apache Kafka) and storage (e.g., S3, BigQuery) for real-time user events and ad performance logs.

- Machine learning framework: TensorFlow or PyTorch for training agents, plus a reinforcement learning library such as RLlib and, optionally, a custom simulation environment.

- Orchestrator service: A lightweight microservice (Python/Go) to manage agent communication and state.

- Experiment platform: A/B testing infrastructure (e.g., LaunchDarkly) to validate agent changes.

- Monitoring stack: Prometheus + Grafana for real-time metrics (CTR, CVR, revenue, latency).

- Team: ML engineers, data engineers, and product managers with domain expertise in advertising.

Step-by-Step Guide

Step 1: Define Agent Responsibilities

Break down the advertising workflow into distinct subproblems. Typical agents include:

- Targeting Agent: Predicts user intent and selects audience segments.

- Creative Agent: Chooses or generates ad copy and images optimized for engagement.

- Bidding Agent: Determines cost-per-click or cost-per-impression bids in real time.

- Timing Agent: Decides the optimal moment to serve an ad based on user session context.

For each agent, define its input (features), output (decision), and success metric (e.g., CTR for Creative, auction win rate for Bidding).
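One way to make these definitions concrete is to write them down as data before building any model. The sketch below is only an illustration: `AgentSpec`, `check_pipeline`, and every feature and metric name in it are hypothetical, chosen to mirror the four agents listed above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Declares what an agent reads, what it decides, and how it is judged."""
    name: str
    inputs: tuple[str, ...]   # feature names the agent consumes
    output: str               # name of the decision it produces
    success_metric: str       # metric used to evaluate the agent


# Hypothetical specs; feature and metric names are placeholders.
AGENT_SPECS = [
    AgentSpec("targeting", ("device", "location", "session_history"),
              "segment_id", "conversion_rate_per_segment"),
    AgentSpec("creative", ("segment_id",), "creative_id", "ctr"),
    AgentSpec("timing", ("session_history",), "serve_delay", "impression_to_click_delay"),
    AgentSpec("bidding", ("segment_id", "creative_id"), "bid", "auction_win_rate"),
]


def check_pipeline(specs, request_fields):
    """Return {agent name: missing inputs} for agents whose inputs nobody provides."""
    available = set(request_fields)
    problems = {}
    for spec in specs:
        missing = set(spec.inputs) - available
        if missing:
            problems[spec.name] = missing
        available.add(spec.output)  # later agents may read this decision
    return problems


print(check_pipeline(AGENT_SPECS, {"device", "location", "session_history"}))
```

Checking the specs in execution order catches wiring mistakes early, for example a Bidding Agent that expects a creative ID that no upstream agent produces.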

Step 2: Design Agent Communication Protocols

Agents must share information without creating tight coupling. Use a shared context store (e.g., Redis or a lightweight graph database) where any agent can read/write structured data about the current user and auction. For example:

- The Targeting Agent writes a user segment ID.

- The Creative Agent reads that ID and writes a chosen creative hash.

- The Bidding Agent reads both and writes a bid price.

Define an interface contract (protobuf or JSON schema) to ensure compatibility.
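The write/read sequence above can be sketched with an in-memory stand-in for the shared store. This is a sketch under stated assumptions, not a Redis client: `ContextStore`, the `AUCTION_CONTEXT_SCHEMA` field names, and the type-based contract check are all hypothetical, and a real deployment would more likely validate against a protobuf or JSON schema.

```python
import json
from typing import Any

# Hypothetical contract: field name -> required Python type.
AUCTION_CONTEXT_SCHEMA = {
    "segment_id": str,
    "creative_hash": str,
    "bid_price": float,
}


class ContextStore:
    """In-memory stand-in for a shared store, keyed by auction ID."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def read(self, auction_id: str) -> dict[str, Any]:
        return json.loads(self._data.get(auction_id, "{}"))

    def write(self, auction_id: str, field: str, value: Any) -> None:
        expected = AUCTION_CONTEXT_SCHEMA.get(field)
        if expected is None:
            raise KeyError(f"field {field!r} is not part of the contract")
        if not isinstance(value, expected):
            raise TypeError(f"{field!r} must be of type {expected.__name__}")
        context = self.read(auction_id)
        context[field] = value
        # Stored as serialized JSON, as it would be in an external store.
        self._data[auction_id] = json.dumps(context)


store = ContextStore()
store.write("auction-1", "segment_id", "seg-42")        # Targeting Agent writes
segment = store.read("auction-1")["segment_id"]         # Creative Agent reads
store.write("auction-1", "creative_hash", "a1b2c3")     # Creative Agent writes
context = store.read("auction-1")                       # Bidding Agent reads both
store.write("auction-1", "bid_price", 1.25)             # Bidding Agent writes
print(store.read("auction-1"))
```

The read-modify-write in `write` is not atomic. With a real shared store, per-field writes (for example Redis hash fields) would avoid agents overwriting each other's data.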

Step 3: Train Agents Separately

Train each agent using historical data or a simulation environment. Use supervised learning where labels exist (e.g., past winning bids) or reinforcement learning when exploring new strategies. For the Bidding Agent, a common approach is to model the auction as a Markov decision process and train with policy gradients. For the Creative Agent, use multi-armed bandits to test different creatives and learn which ones perform best per segment.
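As an illustration of the bandit approach for the Creative Agent, here is a minimal epsilon-greedy sketch that keeps separate statistics per segment. Epsilon-greedy is only one of several bandit strategies. The segment names, creative IDs, and the `TRUE_CTR` table used to simulate clicks are invented for the example.

```python
import random
from collections import defaultdict


class SegmentBandit:
    """Epsilon-greedy bandit with separate click statistics per segment."""

    def __init__(self, creatives, epsilon=0.1, seed=0):
        self.creatives = list(creatives)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.shows = defaultdict(int)    # (segment, creative) -> impressions
        self.clicks = defaultdict(int)   # (segment, creative) -> clicks

    def ctr(self, segment, creative):
        shows = self.shows[(segment, creative)]
        return self.clicks[(segment, creative)] / shows if shows else 0.0

    def choose(self, segment):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.creatives)                        # explore
        return max(self.creatives, key=lambda c: self.ctr(segment, c))   # exploit

    def update(self, segment, creative, clicked):
        self.shows[(segment, creative)] += 1
        self.clicks[(segment, creative)] += int(clicked)


# Simulated click probabilities; purely hypothetical numbers.
TRUE_CTR = {
    ("sports", "A"): 0.05, ("sports", "B"): 0.15,
    ("music", "A"): 0.20, ("music", "B"): 0.05,
}

bandit = SegmentBandit(["A", "B"], epsilon=0.1, seed=0)
world = random.Random(1)
for _ in range(5000):
    for segment in ("sports", "music"):
        creative = bandit.choose(segment)
        clicked = world.random() < TRUE_CTR[(segment, creative)]
        bandit.update(segment, creative, clicked)


def best(segment):
    """Creative with the highest estimated CTR for this segment."""
    return max(bandit.creatives, key=lambda c: bandit.ctr(segment, c))


print(best("sports"), best("music"))
```

Because statistics are keyed by segment, the same creative can win in one segment and lose in another, which is the "per segment" behavior described above.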

Ensure each agent’s training data includes the outputs of other agents as features, so they learn to adapt to the system’s collective behavior. For instance, the Bidding Agent should see the segment and creative chosen for the current impression.
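To show what conditioning on upstream outputs can look like for the Bidding Agent, the sketch below trains a REINFORCE-style policy whose bid distribution depends on the segment chosen upstream. It simplifies heavily and should be read as a toy: each auction is a single-step episode rather than a full Markov decision process, the auction is first-price against a uniformly random rival bid, and the bid levels and per-segment values in `BIDS` and `VALUE` are made up.

```python
import math
import random

BIDS = [0.2, 0.5, 0.8]                  # hypothetical discrete bid levels
VALUE = {"high": 2.0, "low": 0.4}       # hypothetical value of a win, per segment


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(steps=20000, lr=0.02, seed=0):
    rng = random.Random(seed)
    logits = {seg: [0.0] * len(BIDS) for seg in VALUE}   # one policy per segment
    baseline = {seg: 0.0 for seg in VALUE}               # running mean reward
    for _ in range(steps):
        for seg in VALUE:
            probs = softmax(logits[seg])
            action = rng.choices(range(len(BIDS)), weights=probs)[0]
            bid = BIDS[action]
            rival = rng.random()                         # simulated competing bid
            # First-price auction: the winner pays its own bid.
            reward = VALUE[seg] - bid if bid > rival else 0.0
            advantage = reward - baseline[seg]
            baseline[seg] += 0.01 * (reward - baseline[seg])
            # Gradient of log pi(action) w.r.t. logit i is 1[i == action] - pi_i.
            for i, p in enumerate(probs):
                grad = (1.0 if i == action else 0.0) - p
                logits[seg][i] += lr * advantage * grad
    return {seg: softmax(values) for seg, values in logits.items()}


policy = train()


def best_bid(segment):
    """Most probable bid level under the learned policy for this segment."""
    probs = policy[segment]
    return BIDS[max(range(len(BIDS)), key=lambda i: probs[i])]


print({seg: best_bid(seg) for seg in VALUE})
```

The point of the example is the dependency, not the algorithm: because the segment is part of the policy's input, the agent can learn to bid more aggressively where a win is worth more.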

Step 4: Implement the Orchestrator

The orchestrator manages the execution order of agents during a single ad request. A typical flow:

- Receive impression request with user context (device, location, session history).

- Call Targeting Agent → returns segment ID.

- Call Creative Agent → returns creative ID.

- Call Timing Agent → returns delay or immediate flag.

- Call Bidding Agent → returns bid amount.

- Return ad decision to the ad server.

Include timeout and fallback logic: if an agent fails or exceeds its latency budget, the orchestrator falls back to a default rule-based decision so that the auction is not dropped.
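The flow and fallback behavior can be sketched with a thread pool and a per-call timeout. Everything here is illustrative: the agent functions are stubs, the fallback rules and the 100 ms budget are arbitrary, and `call_with_fallback` and `handle_request` are hypothetical names.

```python
import time
from concurrent.futures import ThreadPoolExecutor

_POOL = ThreadPoolExecutor(max_workers=4)


def call_with_fallback(agent, context, fallback, timeout_s=0.1):
    """Run an agent with a time budget; use the rule-based fallback on any failure."""
    future = _POOL.submit(agent, dict(context))   # copy so agents cannot mutate shared state
    try:
        return future.result(timeout=timeout_s)
    except Exception:                             # covers timeouts and agent errors
        return fallback(context)


# Stub agents standing in for real model calls.
def targeting_agent(ctx):
    return "seg-sports" if "sports" in ctx["session_history"] else "seg-general"


def creative_agent(ctx):
    return f"creative-for-{ctx['segment_id']}"


def timing_agent(ctx):
    return 0.0                                    # serve immediately


def slow_bidding_agent(ctx):
    time.sleep(0.5)                               # simulates an agent that misses its budget
    return 2.0


# (output key, agent, rule-based fallback), in execution order.
PIPELINE = [
    ("segment_id", targeting_agent, lambda ctx: "seg-general"),
    ("creative_id", creative_agent, lambda ctx: "creative-default"),
    ("delay_s", timing_agent, lambda ctx: 0.0),
    ("bid", slow_bidding_agent, lambda ctx: 0.10),
]


def handle_request(request, pipeline=PIPELINE, timeout_s=0.1):
    ctx = dict(request)
    for key, agent, fallback in pipeline:
        ctx[key] = call_with_fallback(agent, ctx, fallback, timeout_s)
    return {key: ctx[key] for key, _, _ in pipeline}


decision = handle_request(
    {"device": "mobile", "location": "SE", "session_history": ["sports"]}
)
print(decision)
```

Note that a timed-out call keeps running in its worker thread. A production orchestrator would also need cancellation or a bounded queue so that slow agents cannot exhaust the pool.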

Step 5: Add Feedback Loops

After each auction, whether the ad was served or the auction was lost, collect outcome signals (click, conversion, no action). Feed these back to each agent as rewards or labels. For the Bidding Agent, the reward could be revenue minus cost; for the Creative Agent, it could be CTR or another engagement metric. Store outcomes in the shared context store so agents can read them asynchronously and retrain incrementally.
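A minimal sketch of turning outcomes into per-agent rewards follows. The `Outcome` fields and the decision to give the Creative Agent no label for lost auctions are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Outcome:
    won_auction: bool
    clicked: bool
    converted: bool
    cost: float      # amount paid for the impression (0 if the auction was lost)
    revenue: float   # value attributed to the outcome (hypothetical attribution)


def bidding_reward(outcome: Outcome) -> float:
    """Revenue minus cost; a lost auction earns and costs nothing."""
    return outcome.revenue - outcome.cost if outcome.won_auction else 0.0


def creative_reward(outcome: Outcome) -> Optional[float]:
    """1.0 for a click, 0.0 for no click, None if the ad was never shown."""
    return float(outcome.clicked) if outcome.won_auction else None


def ctr(outcomes) -> float:
    """Click-through rate over served impressions only."""
    served = [o for o in outcomes if o.won_auction]
    return sum(o.clicked for o in served) / len(served) if served else 0.0


outcomes = [
    Outcome(won_auction=True, clicked=True, converted=True, cost=0.5, revenue=5.0),
    Outcome(won_auction=True, clicked=False, converted=False, cost=0.25, revenue=0.0),
    Outcome(won_auction=False, clicked=False, converted=False, cost=0.0, revenue=0.0),
]
print([bidding_reward(o) for o in outcomes], ctr(outcomes))
```

Keeping lost auctions in the log matters for the Bidding Agent, since they show which bids were too low, even though they carry no signal for the Creative Agent.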

Step 6: Run A/B Tests

Compare the multi-agent system against your existing monolithic baseline. Reserve a slice of traffic (e.g., 10%) for the experiment and measure key business metrics (eCPM, revenue per user) alongside operational ones (latency, model freshness). Because multiple agents interact, test changes in isolation: for example, replace only the Bidding Agent with a new version while keeping the others constant.
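Traffic splits like this are often implemented with deterministic hashing so that a user always lands in the same arm. The sketch below assumes the 10% slice receives the variant under test. The function name, arm labels, and experiment key are hypothetical.

```python
import hashlib


def assign_arm(user_id: str, experiment: str, treatment_share: float = 0.10) -> str:
    """Deterministically map a user to 'treatment' or 'control' for one experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000   # uniform value in [0, 1)
    return "treatment" if bucket < treatment_share else "control"


# Share of 10,000 synthetic users that land in the treatment arm.
share = sum(
    assign_arm(f"user-{i}", "bidding-agent-v2") == "treatment" for i in range(10_000)
) / 10_000
print(share)
```

Including the experiment name in the hash keeps assignments independent across experiments, which helps when testing one agent at a time.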

Step 7: Monitor and Optimize

Track per-agent metrics such as:

- Targeting Agent: segment hit rate, conversion rate per segment.

- Creative Agent: creative rotation frequency, fatigue detection.

- Bidding Agent: win rate, bid distribution.

- Timing Agent: impression-to-click delay, viewability.

Set up alerts for drift or performance degradation. Periodically retrain all agents with fresh data, and consider auto-tuning hyperparameters via Bayesian optimization.
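One common way to quantify drift in a metric such as the bid distribution is the Population Stability Index (PSI). The sketch below is an assumption about how such an alert might be built, not a prescribed method. The 0.2 threshold is a frequently quoted rule of thumb that should be tuned, and the bid samples are synthetic.

```python
import math
import random


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples, binned on the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0   # avoid zero width if all reference values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1   # clamp outliers into the edge bins
        return [max(c / len(values), eps) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


DRIFT_THRESHOLD = 0.2   # rule-of-thumb value; an assumption to tune per metric

rng = random.Random(0)
last_week = [rng.gauss(1.0, 0.2) for _ in range(5000)]   # synthetic reference bids
stable = [rng.gauss(1.0, 0.2) for _ in range(5000)]      # same distribution
shifted = [rng.gauss(1.3, 0.2) for _ in range(5000)]     # mean has drifted upward

for name, sample in (("stable", stable), ("shifted", shifted)):
    score = psi(last_week, sample)
    print(name, round(score, 3), "ALERT" if score > DRIFT_THRESHOLD else "ok")
```

The same function can be applied to other per-agent signals, such as the share of traffic falling into each segment.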

Step 8: Scale and Iterate

As the system grows, you may add new agents (e.g., budget pacing, fraud detection). Package each agent as a separate microservice with its own deployment pipeline. Use container orchestration (Kubernetes) to scale individual agents based on load. Maintain a shared simulation environment for integration testing before full rollout.

Tips for Success

- Start simple: Implement only two agents (e.g., Targeting + Bidding) before adding more. This reduces complexity and lets you validate the architecture.

- Use decoupled communication: Where the latency budget allows, prefer asynchronous message queues (e.g., RabbitMQ) over synchronous REST calls to avoid cascading failures.

- Experiment carefully: Because agents influence each other, a change in one can affect the others’ effectiveness. Run multivariate tests to understand interactions.

- Invest in logging: Log every agent decision and outcome to debug unexpected behaviors.

- Iterate on agent boundaries: If two agents often conflict (e.g., Creative and Bidding both trying to trade off engagement vs. cost), consider merging them into a single multi-objective agent.

- Involve domain experts: Advertisers and campaign managers can provide insights on which decisions should be made by rule-based systems vs. learned agents.